The article lists down two most commonly used folder structure for a Spring MVC/Hibernate based web application project. In fact, this folder structure could be used with any other MVC framework like Spring. Following are different two folder structures described later in this article:

- Eclipse-based Web Application (Dynamic Web Project)

- Maven-based Web Application Project

Eclipse-based Web Application (Dynamic Web Project)

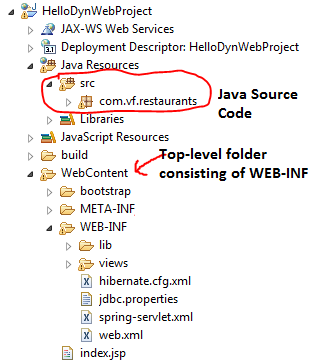

As per this Eclipse page, Dynamic web projects contain dynamic Java EE resources such as servlets, JSP files, filters, and associated metadata, in addition to static resources such as images and HTML files. Following is how the folder structure looks like on the disk:

- src

- packages (com.orgid.abc)

- WebContent

- CSS/JS Folders (Publicly accessible; DO NOT Put them within WEB-INF)

- META-INF (Manifest.mf)

- WEB-INF

- lib (Consists of Spring, Hibernate and dependent libraries)

- views (depends upon the configuration in spring-servlet.xml)

- web.xml

- spring-servlet.xml (Spring related configuration)

- hibernate.cfg.xml (Hibernate related configuration)

Following is the sample screenshot of how it looks within Eclipse:

Maven-based Web Application Project

Following is the standard folder structure one would find in many of the web applications. It is also the directory layout expected by Maven and the directory layout created by Maven. The details about this folder structure could be found on the Maven Apache page. The directory structure is primarily laid down based on two code groupings:

- src/main: Main build artifact consisting of Java code, configuration files, filters, scripts, application libraries etc. All these artifacts are placed within “src/main” and sub-folders as java, webapp, resources, filters, configs, scripts. It looks like following:”

- src/test: Test build artifact consisting of unit test code. The unit tests code is placed within folder such as “src/test/java”.

Following is the minimum folder structure based on above description that would be enough to run your hello world Spring MVC/Hibernate application:

- src/main/java: Consists of source code

- src/main/webapp: Consists of libraries, configuration files, resources etc.

- CSS/JS Folders (Publicly accessible; DO NOT put them within WEB-INF)

- META-INF (Manifest.mf)

- WEB-INF

- lib (Consists of Spring, Hibernate and dependent libraries)

- views (Depends upon the configuration in spring-servlet.xml)

- web.xml

- spring-servlet.xml (Spring related configuration)

- hibernate.cfg.xml (Hibernate related configuration)

- src/test/java: Consists of unit test code

If you are working on Maven based project, you could adopt the Maven-based folder structure. Build tools such as Gradle work like charm. However, Gradle could easily be configured to work with Eclipse-based dynamic web project folder structure. This will be discussed in later articles. Rookies could start with Eclipse-based folder structure.

'dev > spring framework' 카테고리의 다른 글

| 정상 로그 (0) | 2017.02.14 |

|---|---|

| Spring Legacy Project 에서 MVC Project가 안보일때 해결방법 (0) | 2017.01.04 |

111

'dev' 카테고리의 다른 글

| lorem ipsum (0) | 2017.04.13 |

|---|---|

| nexus ubunto (0) | 2017.03.27 |

| Anti-OOP: if를 피하는 법 (0) | 2017.02.01 |

| XML vs JSON (0) | 2017.01.26 |

| TDD 무엇을 테스트 할 것인가? (0) | 2017.01.23 |

Anti-OOP: if를 피하는 법

http://meetup.toast.com/posts/94

'dev' 카테고리의 다른 글

| nexus ubunto (0) | 2017.03.27 |

|---|---|

| ISDP (0) | 2017.02.02 |

| XML vs JSON (0) | 2017.01.26 |

| TDD 무엇을 테스트 할 것인가? (0) | 2017.01.23 |

| 윈도우10 FTP서버 설치후 아웃바운드 방화벽 문제 (0) | 2017.01.02 |

정리

뭘써야하는가?

Radis : in-memory

Cassandra : equal 쿼리에서 고성능, range 쿼리 불가

Hbase : equal, range 쿼리, row key partion 스캔 가능

MongoDB : 우리 DB를 모두 document화, 인덱스설정, 애자일한 특성 (prototype 구현시)

, 샤드키 선정 신경 많이 써야함. 샤드키 변경불가능함

때에 따라서 비정규화와 정규화를 혼용

MongoDB : 처음에 정규화 → 비정규화

'dev > NoSQL 모델링' 카테고리의 다른 글

| mongodb GUI client (0) | 2017.05.09 |

|---|---|

| 초간단 nasdaq 데이터분석 (0) | 2017.04.27 |

| 카산드라 (0) | 2017.01.25 |

| NoSql 모델링 기법 (0) | 2017.01.25 |

| Shard Cluster (1) | 2017.01.25 |

XML vs JSON

|

XML |

JSON |

SIZE |

↑ |

↓ |

Web App 접근성 |

↓ |

↑ |

Schema |

○ xsn |

△ |

'dev' 카테고리의 다른 글

| nexus ubunto (0) | 2017.03.27 |

|---|---|

| ISDP (0) | 2017.02.02 |

| Anti-OOP: if를 피하는 법 (0) | 2017.02.01 |

| TDD 무엇을 테스트 할 것인가? (0) | 2017.01.23 |

| 윈도우10 FTP서버 설치후 아웃바운드 방화벽 문제 (0) | 2017.01.02 |

카산드라

[cas@s1 ~]$ ./cassandra/bin/cqlsh

Connected to Test Cluster at localhost:9160.

[cqlsh 4.1.1 | Cassandra 2.0.13 | CQL spec 3.1.1 | Thrift protocol 19.39.0]

Use HELP for help.

cqlsh> use testdb

...

...

...

... ;

cqlsh:testdb>

cqlsh:testdb>

cqlsh:testdb>

cqlsh:testdb> CREATE TABLE products (

... productid int,

... productname varchar,

... price int,

... PRIMARY KEY (productid)

... );

package com.multi.cas.jdbc1;

import java.sql.Connection;

import java.sql.DriverManager;

import java.sql.PreparedStatement;

public class InsertBulkData {

public static void main(String[] args) throws Exception {

Class.forName("org.apache.cassandra.cql.jdbc.CassandraDriver");

//Connection con = DriverManager.getConnection("jdbc:cassandra://localhost:9160/testdb");

Connection con = DriverManager.getConnection("jdbc:cassandra://192.168.56.101:9160/testdb");

String sql = "INSERT INTO products (productid, productname, price) VALUES (?, ?, ?)";

PreparedStatement pstmt = con.prepareStatement(sql);

for (int i=1; i <= 3000; i++) {

pstmt.setInt(1, i);

pstmt.setString(2, "아이패드" + i);

pstmt.setInt(3, 1000+(500*(i % 10)));

pstmt.executeUpdate();

}

pstmt.close();

con.close();

System.out.println("Row 생성 완료!!");

}

}

cqlsh:testdb>

cqlsh:testdb>

cqlsh:testdb> CREATE INDEX idx_price ON products(price);

cqlsh:testdb>

cqlsh:testdb>

cqlsh:testdb> SELECT * FROM products WHERE productid IN (1,2,3,4,5,6,7,8,9,10);

productid | price | productname

-----------+-------+-------------

1 | 1500 | 아이패드1

2 | 2000 | 아이패드2

3 | 2500 | 아이패드3

4 | 3000 | 아이패드4

5 | 3500 | 아이패드5

6 | 4000 | 아이패드6

7 | 4500 | 아이패드7

8 | 5000 | 아이패드8

9 | 5500 | 아이패드9

10 | 1000 | 아이패드10

(10 rows)

cqlsh:testdb> SELECT * FROM products WHERE productid=1;

productid | price | productname

-----------+-------+-------------

1 | 1500 | 아이패드1

(1 rows)

cqlsh:testdb> SELECT * FROM products WHERE productid IN (1,2,3,4,5,6,7,8,9,10) AND price = 3000;

Bad Request: Select on indexed columns and with IN clause for the PRIMARY KEY are not supported

cqlsh:testdb> SELECT * FROM products WHERE price = 3000 LIMIT 10;

productid | price | productname

-----------+-------+--------------

1584 | 3000 | 아이패드1584

114 | 3000 | 아이패드114

2744 | 3000 | 아이패드2744

2524 | 3000 | 아이패드2524

744 | 3000 | 아이패드744

2074 | 3000 | 아이패드2074

2354 | 3000 | 아이패드2354

214 | 3000 | 아이패드214

1224 | 3000 | 아이패드1224

144 | 3000 | 아이패드144

(10 rows)

cqlsh:testdb>

CREATE TABLE products2 ( productid int, type varchar,

productname varchar, price int,

PRIMARY KEY (type, productid)

);

UPDATE products2 SET productname='맥북에어2' WHERE type='C';

에러 발생 : primary key의 일부분인 productid에 대해 EQ 연산이 포함되지 않았음.

=> 멀티update 허용 X

=> 한 row에서 여러개 update안됨

카산드라 모델링은 힘들다

요구사항이 바뀌어야 하는 상황이면 미침.

'dev > NoSQL 모델링' 카테고리의 다른 글

| 초간단 nasdaq 데이터분석 (0) | 2017.04.27 |

|---|---|

| 정리 (0) | 2017.01.26 |

| NoSql 모델링 기법 (0) | 2017.01.25 |

| Shard Cluster (1) | 2017.01.25 |

| Replica set (0) | 2017.01.25 |

NoSql 모델링 기법

정렬기능 들어가면 카산드라는 OUT

스키마가 자주 바뀌는 경우 : HBASE, 카산드라 OUT

Cassandra : java 로 구현

Cassandra의 c++구현 : scylla, http://www.scylladb.com/

시계열에 강한 db도 있다

타밀어

https://en.wikipedia.org/wiki/Time_series_database

[계층적모델링]

Tree Aggregation

p300

'dev > NoSQL 모델링' 카테고리의 다른 글

| 정리 (0) | 2017.01.26 |

|---|---|

| 카산드라 (0) | 2017.01.25 |

| Shard Cluster (1) | 2017.01.25 |

| Replica set (0) | 2017.01.25 |

| 4. Document Database (0) | 2017.01.24 |

Shard Cluster

mongodb는 샤딩이 필수가 아님

[mongodb@s1 mongodb]$ ./bin/mongos --configdb s2:20000,s3:20000,s4:20000 --chunkSize 1 --port 27017

2017-01-25T10:51:52.972+0900 [mongosMain] MongoS version 2.6.9 starting: pid=10689 port=27017 64-bit host=s1.test.com (--help for usage)

2017-01-25T10:51:52.973+0900 [mongosMain] db version v2.6.9

2017-01-25T10:51:52.973+0900 [mongosMain] git version: df313bc75aa94d192330cb92756fc486ea604e64

2017-01-25T10:51:52.973+0900 [mongosMain] build info: Linux build20.nj1.10gen.cc 2.6.32-431.3.1.el6.x86_64 #1 SMP Fri Jan 3 21:39:27 UTC 2014 x86_64 BOOST_LIB_VERSION=1_49

2017-01-25T10:51:52.973+0900 [mongosMain] allocator: tcmalloc

2017-01-25T10:51:52.973+0900 [mongosMain] options: { net: { port: 27017 }, sharding: { chunkSize: 1, configDB: "s2:20000,s3:20000,s4:20000" } }

2017-01-25T10:51:53.203+0900 [mongosMain] SyncClusterConnection connecting to [s2:20000]

2017-01-25T10:51:53.203+0900 [mongosMain] SyncClusterConnection connecting to [s3:20000]

2017-01-25T10:51:53.205+0900 [mongosMain] SyncClusterConnection connecting to [s4:20000]

2017-01-25T10:51:53.373+0900 [mongosMain] scoped connection to s2:20000,s3:20000,s4:20000 not being returned to the pool

2017-01-25T10:51:53.583+0900 [mongosMain] SyncClusterConnection connecting to [s2:20000]

2017-01-25T10:51:53.584+0900 [LockPinger] creating distributed lock ping thread for s2:20000,s3:20000,s4:20000 and process s1.test.com:27017:1485309113:1804289383 (sleeping for 30000ms)

2017-01-25T10:51:53.584+0900 [LockPinger] SyncClusterConnection connecting to [s2:20000]

2017-01-25T10:51:53.585+0900 [mongosMain] SyncClusterConnection connecting to [s3:20000]

2017-01-25T10:51:53.586+0900 [mongosMain] SyncClusterConnection connecting to [s4:20000]

2017-01-25T10:51:53.586+0900 [LockPinger] SyncClusterConnection connecting to [s3:20000]

2017-01-25T10:51:53.588+0900 [LockPinger] SyncClusterConnection connecting to [s4:20000]

2017-01-25T10:51:54.283+0900 [LockPinger] cluster s2:20000,s3:20000,s4:20000 pinged successfully at Wed Jan 25 10:51:53 2017 by distributed lock pinger 's2:20000,s3:20000,s4:20000/s1.test.com:27017:1485309113:1804289383', sleeping for 30000ms

2017-01-25T10:51:54.491+0900 [mongosMain] distributed lock 'configUpgrade/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804b9967a58ec329a19f3

2017-01-25T10:51:54.495+0900 [mongosMain] starting upgrade of config server from v0 to v5

2017-01-25T10:51:54.495+0900 [mongosMain] starting next upgrade step from v0 to v5

2017-01-25T10:51:54.495+0900 [mongosMain] about to log new metadata event: { _id: "s1.test.com-2017-01-25T01:51:54-588804ba967a58ec329a19f4", server: "s1.test.com", clientAddr: "N/A", time: new Date(1485309114495), what: "starting upgrade of config database", ns: "config.version", details: { from: 0, to: 5 } }

2017-01-25T10:51:54.666+0900 [mongosMain] creating WriteBackListener for: s2:20000 serverID: 000000000000000000000000

2017-01-25T10:51:54.714+0900 [mongosMain] creating WriteBackListener for: s3:20000 serverID: 000000000000000000000000

2017-01-25T10:51:54.787+0900 [mongosMain] creating WriteBackListener for: s4:20000 serverID: 000000000000000000000000

2017-01-25T10:51:55.071+0900 [mongosMain] writing initial config version at v5

2017-01-25T10:51:55.160+0900 [mongosMain] about to log new metadata event: { _id: "s1.test.com-2017-01-25T01:51:55-588804bb967a58ec329a19f6", server: "s1.test.com", clientAddr: "N/A", time: new Date(1485309115160), what: "finished upgrade of config database", ns: "config.version", details: { from: 0, to: 5 } }

2017-01-25T10:51:55.242+0900 [mongosMain] upgrade of config server to v5 successful

2017-01-25T10:51:55.369+0900 [mongosMain] distributed lock 'configUpgrade/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:51:56.240+0900 [mongosMain] scoped connection to s2:20000,s3:20000,s4:20000 not being returned to the pool

2017-01-25T10:51:56.242+0900 [mongosMain] waiting for connections on port 27017

2017-01-25T10:51:56.276+0900 [Balancer] about to contact config servers and shards

2017-01-25T10:51:56.276+0900 [Balancer] SyncClusterConnection connecting to [s2:20000]

2017-01-25T10:51:56.277+0900 [Balancer] SyncClusterConnection connecting to [s3:20000]

2017-01-25T10:51:56.278+0900 [Balancer] SyncClusterConnection connecting to [s4:20000]

2017-01-25T10:51:56.280+0900 [Balancer] config servers and shards contacted successfully

2017-01-25T10:51:56.280+0900 [Balancer] balancer id: s1.test.com:27017 started at Jan 25 10:51:56

2017-01-25T10:51:56.283+0900 [Balancer] SyncClusterConnection connecting to [s2:20000]

2017-01-25T10:51:56.284+0900 [Balancer] SyncClusterConnection connecting to [s3:20000]

2017-01-25T10:51:56.285+0900 [Balancer] SyncClusterConnection connecting to [s4:20000]

2017-01-25T10:51:56.761+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804bc967a58ec329a19f8 <- 분산락 획득

2017-01-25T10:51:56.899+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:03.123+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804c2967a58ec329a19f9

2017-01-25T10:52:03.226+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:09.534+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804c9967a58ec329a19fa

2017-01-25T10:52:09.673+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:15.885+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804cf967a58ec329a19fb

2017-01-25T10:52:16.023+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:22.322+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804d6967a58ec329a19fc

2017-01-25T10:52:22.461+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:24.495+0900 [LockPinger] cluster s2:20000,s3:20000,s4:20000 pinged successfully at Wed Jan 25 10:52:24 2017 by distributed lock pinger 's2:20000,s3:20000,s4:20000/s1.test.com:27017:1485309113:1804289383', sleeping for 30000ms

2017-01-25T10:52:28.700+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804dc967a58ec329a19fd

2017-01-25T10:52:28.838+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:35.139+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804e2967a58ec329a19fe

2017-01-25T10:52:35.278+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:41.543+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804e9967a58ec329a19ff

2017-01-25T10:52:41.683+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:47.911+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804ef967a58ec329a1a00

2017-01-25T10:52:48.014+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:54.274+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' acquired, ts : 588804f6967a58ec329a1a01

2017-01-25T10:52:54.345+0900 [Balancer] distributed lock 'balancer/s1.test.com:27017:1485309113:1804289383' unlocked.

2017-01-25T10:52:54.759+0900 [LockPinger] cluster s2:20000,s3:20000,s4:20000 pinged successfully at Wed Jan 25 10:52:54 2017 by distributed lock pinger 's2:20000,s3:20000,s4:20000/s1.test.com:27017:1485309113:1804289383', sleeping for 30000ms

'dev > NoSQL 모델링' 카테고리의 다른 글

| 카산드라 (0) | 2017.01.25 |

|---|---|

| NoSql 모델링 기법 (0) | 2017.01.25 |

| Replica set (0) | 2017.01.25 |

| 4. Document Database (0) | 2017.01.24 |

| 3. Column Family Database (0) | 2017.01.24 |

Replica set

Replica set 는 홀수개를 운영해야함

- 노드가 짝수개밖에 없으면, 포트번호로 추가지정가능

Primary :

Secondary :

Arbiter : heart bit 역할, 부하거의 없음

cd ~/mongodb

rm -rf data/db

mkdir -p data/db

./bin/mongod --dbpath data/db --replSet test1 --oplogSize 1

C:\mongodb\bin>mongo.exe s1:27017

2017-01-25T09:36:31.983+0900 Hotfix KB2731284 or later update is not installed, will zero-out data files

MongoDB shell version: 2.6.12

connecting to: s1:27017/test

> use admin

switched to db admin

> rs.initiate()

{

"info2" : "no configuration explicitly specified -- making one",

"me" : "s1.test.com:27017",

"info" : "Config now saved locally. Should come online in about a minute.",

"ok" : 1

}

>

test1:PRIMARY>

test1:PRIMARY> rs.con

rs.conf( rs.config( rs.constructor

test1:PRIMARY> rs.conf()

{

"_id" : "test1",

"version" : 1,

"members" : [

{

"_id" : 0,

"host" : "s1.test.com:27017"

}

]

}

test1:PRIMARY> cfg = rs.conf()

{

"_id" : "test1",

"version" : 1,

"members" : [

{

"_id" : 0,

"host" : "s1.test.com:27017"

}

]

}

test1:PRIMARY> cfg.members[0].host = "s1:27017";

s1:27017

test1:PRIMARY> rs.reconfig(cfg);

2017-01-25T09:40:17.336+0900 DBClientCursor::init call() failed

2017-01-25T09:40:17.338+0900 trying reconnect to s1:27017 (192.168.56.101) failed

2017-01-25T09:40:17.340+0900 reconnect s1:27017 (192.168.56.101) ok

reconnected to server after rs command (which is normal)

test1:PRIMARY> rs.add("s2:27017");

{ "ok" : 1 }

test1:PRIMARY> rs.addArb("s3:27017");

{ "ok" : 1 }

test1:PRIMARY> rs.conf()

{

"_id" : "test1",

"version" : 4,

"members" : [

{

"_id" : 0,

"host" : "s1:27017"

},

{

"_id" : 1,

"host" : "s2:27017"

},

{

"_id" : 2,

"host" : "s3:27017",

"arbiterOnly" : true

}

]

}

test1:PRIMARY> rs.status()

{

"set" : "test1",

"date" : ISODate("2017-01-25T00:41:16Z"),

"myState" : 1,

"members" : [

{

"_id" : 0,

"name" : "s1:27017",

"health" : 1,

"state" : 1,

"stateStr" : "PRIMARY",

"uptime" : 613,

"optime" : Timestamp(1485304834, 1),

"optimeDate" : ISODate("2017-01-25T00:40:34Z"),

"electionTime" : Timestamp(1485304638, 1),

"electionDate" : ISODate("2017-01-25T00:37:18Z"),

"self" : true

},

{

"_id" : 1,

"name" : "s2:27017",

"health" : 1,

"state" : 2,

"stateStr" : "SECONDARY",

"uptime" : 43,

"optime" : Timestamp(1485304834, 1),

"optimeDate" : ISODate("2017-01-25T00:40:34Z"),

"lastHeartbeat" : ISODate("2017-01-25T00:41:15Z"),

"lastHeartbeatRecv" : ISODate("2017-01-25T00:41:16Z"),

"pingMs" : 0,

"syncingTo" : "s1:27017"

},

{

"_id" : 2,

"name" : "s3:27017",

"health" : 1,

"state" : 7,

"stateStr" : "ARBITER",

"uptime" : 42, <= Arbiter는 OPtime 없음

"lastHeartbeat" : ISODate("2017-01-25T00:41:16Z"),

"lastHeartbeatRecv" : ISODate("2017-01-25T00:41:16Z"),

"pingMs" : 0

}

],

"ok" : 1

}

test1:PRIMARY>

use test

for (var i=0; i < 10000; i++) {

db.user.save({name:"john"+i, age:20 + (i%10) })

}

C:\mongodb\bin>mongo.exe s2:27017

2017-01-25T09:44:49.448+0900 Hotfix KB2731284 or later update is not installed, will zero-out data files

MongoDB shell version: 2.6.12

connecting to: s2:27017/test

test1:SECONDARY>

test1:SECONDARY> use test

switched to db test

test1:SECONDARY> db.user.findOne()

2017-01-25T09:45:12.489+0900 error: { "$err" : "not master and slaveOk=false", "code" : 13435 } at src/mongo/shell/query.js:131

test1:SECONDARY> rs.slaveOk();

test1:SECONDARY> db.user.findOne()

{

"_id" : ObjectId("5887f4bc514b2dcdfa1a4554"),

"name" : "john0",

"age" : 20

}

test1:SECONDARY> db.user.insert({name :"obama", age:60});

WriteResult({ "writeError" : { "code" : undefined, "errmsg" : "not master" } })

test1:SECONDARY>

[Oplog]

test1:PRIMARY> use test

switched to db test

test1:PRIMARY> db.user.insert({ name :'gdhong', age:20})

WriteResult({ "nInserted" : 1 })

test1:PRIMARY> db.user.update({name:'gdhong'}, { $set : { age:30 }})

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

test1:PRIMARY>

test1:PRIMARY> use local

switched to db local

test1:PRIMARY>

test1:PRIMARY> db.oplog.rs.find().sort({ts:-1}).limit(2).pretty();

{

"ts" : Timestamp(1485305312, 1),

"h" : NumberLong("-2545699453346556688"),

"v" : 2,

"op" : "u",

"ns" : "test.user",

"o2" : {

"_id" : ObjectId("5887f5df514b2dcdfa1a6c64")

},

"o" : {

"$set" : {

"age" : 30

}

}

}

{

"ts" : Timestamp(1485305311, 1),

"h" : NumberLong("-4829261684578901723"),

"v" : 2,

"op" : "i",

"ns" : "test.user",

"o" : {

"_id" : ObjectId("5887f5df514b2dcdfa1a6c64"),

"name" : "gdhong",

"age" : 20

}

}

[Primary 죽이기]

2017-01-25T09:52:21.070+0900 [initandlisten] connection accepted from 192.168.56.103:52089 #57 (5 connections now open)

2017-01-25T09:52:32.578+0900 [conn56] end connection 192.168.56.102:57793 (4 connections now open)

2017-01-25T09:52:32.579+0900 [initandlisten] connection accepted from 192.168.56.102:57795 #58 (5 connections now open)

^C2017-01-25T09:52:45.426+0900 [signalProcessingThread] got signal 2 (Interrupt), will terminate after current cmd ends

2017-01-25T09:52:45.427+0900 [signalProcessingThread] now exiting

2017-01-25T09:52:45.427+0900 [signalProcessingThread] dbexit:

2017-01-25T09:52:45.427+0900 [signalProcessingThread] shutdown: going to close listening sockets...

2017-01-25T09:52:45.427+0900 [signalProcessingThread] closing listening socket: 7

2017-01-25T09:52:45.427+0900 [signalProcessingThread] closing listening socket: 8

2017-01-25T09:52:45.427+0900 [signalProcessingThread] removing socket file: /tmp/mongodb-27017.sock

2017-01-25T09:52:45.428+0900 [signalProcessingThread] shutdown: going to flush diaglog...

2017-01-25T09:52:45.428+0900 [signalProcessingThread] shutdown: going to close sockets...

2017-01-25T09:52:45.428+0900 [signalProcessingThread] shutdown: waiting for fs preallocator...

2017-01-25T09:52:45.428+0900 [signalProcessingThread] shutdown: lock for final commit...

2017-01-25T09:52:45.428+0900 [signalProcessingThread] shutdown: final commit...

2017-01-25T09:52:45.430+0900 [conn2] end connection 192.168.56.1:49553 (4 connections now open)

2017-01-25T09:52:45.430+0900 [conn11] end connection 192.168.56.102:57746 (4 connections now open)

2017-01-25T09:52:45.431+0900 [conn57] end connection 192.168.56.103:52089 (4 connections now open)

2017-01-25T09:52:45.431+0900 [conn58] end connection 192.168.56.102:57795 (4 connections now open)

2017-01-25T09:52:45.433+0900 [signalProcessingThread] shutdown: closing all files...

2017-01-25T09:52:45.434+0900 [signalProcessingThread] closeAllFiles() finished

2017-01-25T09:52:45.434+0900 [signalProcessingThread] journalCleanup...

2017-01-25T09:52:45.434+0900 [signalProcessingThread] removeJournalFiles

2017-01-25T09:52:45.473+0900 [signalProcessingThread] shutdown: removing fs lock...

2017-01-25T09:52:45.493+0900 [signalProcessingThread] dbexit: really exiting now

다시 살리면

[mongodb@s1 mongodb]$ ./bin/mongod --dbpath data/db --replSet test1

2017-01-25T09:55:18.797+0900 [initandlisten] MongoDB starting : pid=9101 port=27017 dbpath=data/db 64-bit host=s1.test.com

2017-01-25T09:55:18.812+0900 [initandlisten] db version v2.6.9

2017-01-25T09:55:18.813+0900 [initandlisten] git version: df313bc75aa94d192330cb92756fc486ea604e64

2017-01-25T09:55:18.813+0900 [initandlisten] build info: Linux build20.nj1.10gen.cc 2.6.32-431.3.1.el6.x86_64 #1 SMP Fri Jan 3 21:39:27 UTC 2014 x86_64 BOOST_LIB_VERSION=1_49

2017-01-25T09:55:18.813+0900 [initandlisten] allocator: tcmalloc

2017-01-25T09:55:18.813+0900 [initandlisten] options: { replication: { replSet: "test1" }, storage: { dbPath: "data/db" } }

2017-01-25T09:55:18.841+0900 [initandlisten] journal dir=data/db/journal

2017-01-25T09:55:18.841+0900 [initandlisten] recover : no journal files present, no recovery needed

2017-01-25T09:55:18.864+0900 [initandlisten] waiting for connections on port 27017

2017-01-25T09:55:18.974+0900 [rsStart] replSet I am s1:27017

2017-01-25T09:55:18.974+0900 [rsStart] replSet STARTUP2

2017-01-25T09:55:18.974+0900 [rsSync] replSet SECONDARY

2017-01-25T09:55:18.975+0900 [rsMgr] replSet can't see a majority, will not try to elect self

2017-01-25T09:55:18.977+0900 [rsHealthPoll] replset info s2:27017 thinks that we are down

2017-01-25T09:55:18.977+0900 [rsHealthPoll] replSet member s2:27017 is up

2017-01-25T09:55:18.977+0900 [rsHealthPoll] replSet member s2:27017 is now in state PRIMARY

2017-01-25T09:55:18.981+0900 [rsHealthPoll] replset info s3:27017 thinks that we are down

2017-01-25T09:55:18.981+0900 [rsHealthPoll] replSet member s3:27017 is up

2017-01-25T09:55:18.981+0900 [rsHealthPoll] replSet member s3:27017 is now in state ARBITER

2017-01-25T09:55:19.456+0900 [initandlisten] connection accepted from 192.168.56.102:58213 #1 (1 connection now open)

2017-01-25T09:55:20.133+0900 [initandlisten] connection accepted from 192.168.56.103:52538 #2 (2 connections now open)

2017-01-25T09:55:20.150+0900 [conn2] end connection 192.168.56.103:52538 (1 connection now open)

2017-01-25T09:55:20.150+0900 [initandlisten] connection accepted from 192.168.56.103:52539 #3 (3 connections now open)

2017-01-25T09:55:20.456+0900 [conn1] end connection 192.168.56.102:58213 (1 connection now open)

2017-01-25T09:55:20.457+0900 [initandlisten] connection accepted from 192.168.56.102:58214 #4 (2 connections now open)

2017-01-25T09:55:23.976+0900 [rsBackgroundSync] replSet syncing to: s2:27017

2017-01-25T09:55:23.978+0900 [rsBackgroundSync] replset setting syncSourceFeedback to s2:27017

2017-01-25T09:55:28.462+0900 [conn4] end connection 192.168.56.102:58214 (1 connection now open)

2017-01-25T09:55:28.463+0900 [initandlisten] connection accepted from 192.168.56.102:58216 #5 (2 connections now open)

2017-01-25T09:55:33.806+0900 [initandlisten] connection accepted from 192.168.56.1:49562 #6 (3 connections now open)

2017-01-25T09:55:46.169+0900 [conn3] end connection 192.168.56.103:52539 (2 connections now open)

2017-01-25T09:55:46.169+0900 [initandlisten] connection accepted from 192.168.56.103:52541 #7 (3 connections now open)

2017-01-25T09:55:58.482+0900 [conn5] end connection 192.168.56.102:58216 (2 connections now open)

2017-01-25T09:55:58.483+0900 [initandlisten] connection accepted from 192.168.56.102:58218 #8 (3 connections now open)

C:\mongodb\bin>mongo.exe s1:27017

2017-01-25T09:55:33.809+0900 Hotfix KB2731284 or later update is not installed, will zero-out data files

MongoDB shell version: 2.6.12

connecting to: s1:27017/test

test1:SECONDARY>

test1:SECONDARY> db.isMaster()

{

"setName" : "test1",

"setVersion" : 4,

"ismaster" : false,

"secondary" : true,

"hosts" : [

"s1:27017",

"s2:27017"

],

"arbiters" : [

"s3:27017"

],

"primary" : "s2:27017",

"me" : "s1:27017",

"maxBsonObjectSize" : 16777216,

"maxMessageSizeBytes" : 48000000,

"maxWriteBatchSize" : 1000,

"localTime" : ISODate("2017-01-25T01:01:12.168Z"),

"maxWireVersion" : 2,

"minWireVersion" : 0,

"ok" : 1

}

'dev > NoSQL 모델링' 카테고리의 다른 글

| NoSql 모델링 기법 (0) | 2017.01.25 |

|---|---|

| Shard Cluster (1) | 2017.01.25 |

| 4. Document Database (0) | 2017.01.24 |

| 3. Column Family Database (0) | 2017.01.24 |

| NO SQL을 선택하는 이유 (0) | 2017.01.23 |

4. Document Database

mongoDB (오픈소스)

수정 (DB내부엔진 바꿈,프렉탈인덱스,트렌젝션기능추가) -> TokuMX

TokuMX

https://www.percona.com/blog/2013/10/31/introducing-tokumx-transactions-for-mongodb-applications/

로깅분석

1.

Fluentd로 apache log를 MongoDB에 저장하기

http://www.sjune.net/archives/1164

2.

flume mongodb

http://tomining.tistory.com/58

MongoDB 스토리지엔진 종류

1. MMapV1 : 읽기전용

2. WiredTiger : 쓰기에 적합

update시 단편화문제발생

document(row한개) 크기가 16M로 제한

tombstone 으로 인해 주기적인 compaction 일어남

특히 MMapV1에서 발생

drwxrwxr-x. 5 mongodb mongodb 4096 2015-04-30 09:52 mongodb-linux-x86_64-2.6.9

-rwxr--r--. 1 mongodb mongodb 412 2015-04-30 09:28 startrs-all.sh <--replica set

-rw-r--r--. 1 mongodb mongodb 494 2015-04-23 23:55 startrs-all.sh~

-rwxr--r--. 1 mongodb mongodb 1104 2015-04-30 09:29 startsh-all.sh <---sharding

-rw-r--r--. 1 mongodb mongodb 1266 2015-04-24 00:18 startsh-all.sh~

-rw-rw-r--. 1 mongodb mongodb 27 2015-04-24 00:23 stop-mongos.js

-rwxr--r--. 1 mongodb mongodb 213 2015-04-23 23:56 stoprs-all.sh

-rw-rw-r--. 1 mongodb mongodb 213 2015-04-23 23:56 stoprs-all.sh~

-rwxr--r--. 1 mongodb mongodb 537 2015-04-24 00:27 stopsh-all.sh

-rwxr--r--. 1 mongodb mongodb 35 2015-04-24 00:10 test.sh

drwxr-xr-x. 2 mongodb mongodb 4096 2013-09-10 19:20 공개

drwxr-xr-x. 2 mongodb mongodb 4096 2015-04-30 09:48 다운로드

drwxr-xr-x. 2 mongodb mongodb 4096 2013-09-10 19:20 문서

drwxr-xr-x. 2 mongodb mongodb 4096 2013-09-10 19:25 바탕화면

drwxr-xr-x. 2 mongodb mongodb 4096 2013-09-10 19:20 비디오

drwxr-xr-x. 2 mongodb mongodb 4096 2013-09-10 19:20 사진

drwxr-xr-x. 2 mongodb mongodb 4096 2013-09-10 19:20 음악

drwxr-xr-x. 2 mongodb mongodb 4096 2013-09-10 19:20 템플릿

[mongodb@s1 ~]$

[MongoDB 윈도우 설치]

https://www.mongodb.com/download-center#previous

custom

[server]

[mongodb@s1 data]$ ll

합계 12

drwxrwxr-x. 2 mongodb mongodb 4096 2015-04-30 09:48 config

drwxrwxr-x. 4 mongodb mongodb 4096 2015-04-30 09:57 rs

drwxrwxr-x. 2 mongodb mongodb 4096 2015-04-30 09:48 sh

[mongodb@s1 data]$ cd ..

[mongodb@s1 mongodb]$ mkdir -p data/db

[mongodb@s1 mongodb]$ ll

합계 72

-rw-r--r--. 1 mongodb mongodb 34520 2015-03-23 23:49 GNU-AGPL-3.0

-rw-r--r--. 1 mongodb mongodb 1359 2015-03-23 23:49 README

-rw-r--r--. 1 mongodb mongodb 17793 2015-03-23 23:49 THIRD-PARTY-NOTICES

drwxrwxr-x. 2 mongodb mongodb 4096 2015-04-30 09:48 bin

drwxrwxr-x. 6 mongodb mongodb 4096 2017-01-24 14:16 data

drwxrwxr-x. 5 mongodb mongodb 4096 2015-04-30 09:52 logs

[mongodb@s1 mongodb]$ ./bin/mongod --dbpath data/db --port 27017

2017-01-24T14:16:56.125+0900 [initandlisten] MongoDB starting : pid=7449 port=27017 dbpath=data/db 64-bit host=s1.test.com

2017-01-24T14:16:56.126+0900 [initandlisten] db version v2.6.9

2017-01-24T14:16:56.127+0900 [initandlisten] git version: df313bc75aa94d192330cb92756fc486ea604e64

2017-01-24T14:16:56.127+0900 [initandlisten] build info: Linux build20.nj1.10gen.cc 2.6.32-431.3.1.el6.x86_64 #1 SMP Fri Jan 3 21:39:27 UTC 2014 x86_64 BOOST_LIB_VERSION=1_49

2017-01-24T14:16:56.127+0900 [initandlisten] allocator: tcmalloc

2017-01-24T14:16:56.127+0900 [initandlisten] options: { net: { port: 27017 }, storage: { dbPath: "data/db" } }

2017-01-24T14:16:56.171+0900 [initandlisten] journal dir=data/db/journal

2017-01-24T14:16:56.171+0900 [initandlisten] recover : no journal files present, no recovery needed

2017-01-24T14:16:58.138+0900 [initandlisten] preallocateIsFaster=true 10.54

2017-01-24T14:17:00.585+0900 [initandlisten] preallocateIsFaster=true 7.26

2017-01-24T14:17:03.648+0900 [initandlisten] preallocateIsFaster=true 11.68

2017-01-24T14:17:03.648+0900 [initandlisten] preallocateIsFaster check took 7.477 secs

2017-01-24T14:17:03.648+0900 [initandlisten] preallocating a journal file data/db/journal/prealloc.0

2017-01-24T14:17:06.274+0900 [initandlisten] File Preallocator Progress: 178257920/1073741824 16%

2017-01-24T14:17:09.543+0900 [initandlisten] File Preallocator Progress: 220200960/1073741824 20%

2017-01-24T14:17:13.312+0900 [initandlisten] File Preallocator Progress: 272629760/1073741824 25%

2017-01-24T14:17:16.120+0900 [initandlisten] File Preallocator Progress: 325058560/1073741824 30%

2017-01-24T14:17:19.078+0900 [initandlisten] File Preallocator Progress: 367001600/1073741824 34%

2017-01-24T14:17:22.826+0900 [initandlisten] File Preallocator Progress: 429916160/1073741824 40%

2017-01-24T14:17:25.598+0900 [initandlisten] File Preallocator Progress: 471859200/1073741824 43%

2017-01-24T14:17:28.308+0900 [initandlisten] File Preallocator Progress: 524288000/1073741824 48%

2017-01-24T14:17:31.689+0900 [initandlisten] File Preallocator Progress: 555745280/1073741824 51%

2017-01-24T14:17:34.126+0900 [initandlisten] File Preallocator Progress: 597688320/1073741824 55%

2017-01-24T14:17:37.166+0900 [initandlisten] File Preallocator Progress: 650117120/1073741824 60%

2017-01-24T14:17:40.235+0900 [initandlisten] File Preallocator Progress: 702545920/1073741824 65%

2017-01-24T14:17:43.297+0900 [initandlisten] File Preallocator Progress: 744488960/1073741824 69%

2017-01-24T14:17:46.119+0900 [initandlisten] File Preallocator Progress: 796917760/1073741824 74%

2017-01-24T14:17:49.165+0900 [initandlisten] File Preallocator Progress: 838860800/1073741824 78%

2017-01-24T14:17:52.092+0900 [initandlisten] File Preallocator Progress: 891289600/1073741824 83%

2017-01-24T14:17:55.872+0900 [initandlisten] File Preallocator Progress: 954204160/1073741824 88%

2017-01-24T14:17:58.377+0900 [initandlisten] File Preallocator Progress: 1006632960/1073741824 93%

2017-01-24T14:18:01.616+0900 [initandlisten] File Preallocator Progress: 1059061760/1073741824 98%

2017-01-24T14:18:13.577+0900 [initandlisten] preallocating a journal file data/db/journal/prealloc.1

2017-01-24T14:18:16.652+0900 [initandlisten] File Preallocator Progress: 209715200/1073741824 19%

2017-01-24T14:18:19.132+0900 [initandlisten] File Preallocator Progress: 241172480/1073741824 22%

2017-01-24T14:18:22.079+0900 [initandlisten] File Preallocator Progress: 283115520/1073741824 26%

2017-01-24T14:18:26.163+0900 [initandlisten] File Preallocator Progress: 346030080/1073741824 32%

2017-01-24T14:18:29.537+0900 [initandlisten] File Preallocator Progress: 408944640/1073741824 38%

2017-01-24T14:18:32.146+0900 [initandlisten] File Preallocator Progress: 450887680/1073741824 41%

2017-01-24T14:18:35.092+0900 [initandlisten] File Preallocator Progress: 503316480/1073741824 46%

2017-01-24T14:18:38.321+0900 [initandlisten] File Preallocator Progress: 545259520/1073741824 50%

2017-01-24T14:18:41.095+0900 [initandlisten] File Preallocator Progress: 943718400/1073741824 87%

2017-01-24T14:18:44.374+0900 [initandlisten] File Preallocator Progress: 985661440/1073741824 91%

2017-01-24T14:18:47.297+0900 [initandlisten] File Preallocator Progress: 1038090240/1073741824 96%

2017-01-24T14:18:59.778+0900 [initandlisten] preallocating a journal file data/db/journal/prealloc.2

2017-01-24T14:19:02.814+0900 [initandlisten] File Preallocator Progress: 220200960/1073741824 20%

2017-01-24T14:19:05.201+0900 [initandlisten] File Preallocator Progress: 251658240/1073741824 23%

2017-01-24T14:19:08.728+0900 [initandlisten] File Preallocator Progress: 325058560/1073741824 30%

2017-01-24T14:19:11.209+0900 [initandlisten] File Preallocator Progress: 367001600/1073741824 34%

2017-01-24T14:19:14.466+0900 [initandlisten] File Preallocator Progress: 408944640/1073741824 38%

2017-01-24T14:19:17.531+0900 [initandlisten] File Preallocator Progress: 461373440/1073741824 42%

2017-01-24T14:19:20.547+0900 [initandlisten] File Preallocator Progress: 513802240/1073741824 47%

2017-01-24T14:19:24.037+0900 [initandlisten] File Preallocator Progress: 576716800/1073741824 53%

2017-01-24T14:19:27.904+0900 [initandlisten] File Preallocator Progress: 639631360/1073741824 59%

2017-01-24T14:19:31.266+0900 [initandlisten] File Preallocator Progress: 702545920/1073741824 65%

2017-01-24T14:19:34.062+0900 [initandlisten] File Preallocator Progress: 744488960/1073741824 69%

2017-01-24T14:19:37.130+0900 [initandlisten] File Preallocator Progress: 796917760/1073741824 74%

2017-01-24T14:19:40.110+0900 [initandlisten] File Preallocator Progress: 838860800/1073741824 78%

2017-01-24T14:19:43.639+0900 [initandlisten] File Preallocator Progress: 891289600/1073741824 83%

2017-01-24T14:19:46.081+0900 [initandlisten] File Preallocator Progress: 933232640/1073741824 86%

2017-01-24T14:19:49.477+0900 [initandlisten] File Preallocator Progress: 975175680/1073741824 90%

2017-01-24T14:19:54.710+0900 [initandlisten] allocating new ns file data/db/local.ns, filling with zeroes...

2017-01-24T14:19:54.913+0900 [FileAllocator] allocating new datafile data/db/local.0, filling with zeroes...

2017-01-24T14:19:54.913+0900 [FileAllocator] creating directory data/db/_tmp

2017-01-24T14:19:54.946+0900 [FileAllocator] done allocating datafile data/db/local.0, size: 64MB, took 0.009 secs

2017-01-24T14:19:55.005+0900 [initandlisten] build index on: local.startup_log properties: { v: 1, key: { _id: 1 }, name: "_id_", ns: "local.startup_log" }

2017-01-24T14:19:55.007+0900 [initandlisten] added index to empty collection

2017-01-24T14:19:55.019+0900 [initandlisten] command local.$cmd command: create { create: "startup_log", size: 10485760, capped: true } ntoreturn:1 keyUpdates:0 numYields:0 reslen:37 308ms

2017-01-24T14:19:55.028+0900 [initandlisten] waiting for connections on port 27017

[client]

C:\mongodb\bin>mongo s1:27017

2017-01-24T14:20:35.732+0900 Hotfix KB2731284 or later update is not installed,

will zero-out data files

MongoDB shell version: 2.6.12

connecting to: s1:27017/test

Welcome to the MongoDB shell.

For interactive help, type "help".

For more comprehensive documentation, see

http://docs.mongodb.org/

Questions? Try the support group

http://groups.google.com/group/mongodb-user

> show dbs

admin (empty)

local 0.078GB

> db

test

> use test

switched to db test

> db.createCollection("users")

{ "ok" : 1 }

> db.users.insert({name:"홍길동", userid:"gdhong", email:"gdhing@a.com"})

WriteResult({ "nInserted" : 1 })

> db.users.insert({name:"이몽룡", userid:"mrlee", email:"mrlee@a.com"})

WriteResult({ "nInserted" : 1 })

> db.users.find()

{ "_id" : ObjectId("5886e523f4b5739d5d8617bc"), "name" : "홍길동", "userid" : "gdhong", "email" : "gdhing@a.com" }

{ "_id" : ObjectId("5886e523f4b5739d5d8617bd"), "name" : "이몽룡", "userid" : "mrlee", "email" : "mrlee@a.com" }

> db.users.find()

_id <--- PK역할, 입력안하면 자동으로 주어진다 (절대중복되지 않는값)

> db.users.insert({_id:"chsung", name:"성춘향", userid:"mrlee", email:"mrlee@a.com

db.users.insert({_id:"chsung", name:"성춘향", userid:"mrlee", email:"mrlee@a.com"})

WriteResult({ "nInserted" : 1 })

> db.users.find()

{ "_id" : ObjectId("5886e523f4b5739d5d8617bc"), "name" : "홍길동", "userid" : "gdhong", "email" : "gdhing@a.com" }

{ "_id" : ObjectId("5886e523f4b5739d5d8617bd"), "name" : "이몽룡", "userid" : "mrlee", "email" : "mrlee@a.com" }

{ "_id" : "chsung" , "name" : "성춘향", "userid" : "mrlee", "email" : "mrlee@a.com" }

>

[실행]

db.users.update({_id:"chsung"}, {name:"최서원"})

// UPDATE users SET name='최서원' where _id='chsung'

[결과]

> db.users.update({_id:"chsung"}, {name:"최서원"})

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

> db.users.find() "})

{ "_id" : ObjectId("5886e523f4b5739d5d8617bc"), "name" : "홍길동", "userid" : "gdhong", "email" : "gdhing@a.com" }

{ "_id" : ObjectId("5886e523f4b5739d5d8617bd"), "name" : "이몽룡", "userid" : "mrlee", "email" : "mrlee@a.com" }

{ "_id" : "chsung", "name" : "최서원" }

<= 나머지 컬럼은 날아감

[mongodb는 기본이 멀티업데이트가 아님]

> db.a1.insert({_id:1, name:"a", v:10})

WriteResult({ "nInserted" : 1 })

> db.a1.insert({_id:2, name:"a", v:10})

WriteResult({ "nInserted" : 1 })

> db.a1.insert({_id:3, name:"b", v:10})

WriteResult({ "nInserted" : 1 })

> db.a1.find()

{ "_id" : 1, "name" : "a", "v" : 10 }

{ "_id" : 2, "name" : "a", "v" : 10 }

{ "_id" : 3, "name" : "b", "v" : 10 }

> db.a1.update({name:"a"}, {$set:{v:20}})

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

> db.a1.find()

{ "_id" : 1, "name" : "a", "v" : 20 }

{ "_id" : 2, "name" : "a", "v" : 10 }

{ "_id" : 3, "name" : "b", "v" : 10 }

> db.a1.update({name:"a"}, {$set:{v:40}}, {multi:true})

WriteResult({ "nMatched" : 2, "nUpserted" : 0, "nModified" : 2 })

> db.a1.find()

{ "_id" : 1, "name" : "a", "v" : 40 }

{ "_id" : 2, "name" : "a", "v" : 40 }

{ "_id" : 3, "name" : "b", "v" : 10 }

※ 주의 multi 수행하다가 중간에 실패나면 이전건을 롤백하지 않음.

트랜젝션을 쓰려면 TokuMX를 쓰면됨

[upsert]

테이블 미리 안만들어도됨

> show collections

a1

system.indexes

users

> db.pageviews.update({ _id : 'http://naver.com' }, { $inc : { hits : 1} }, { upsert:true });

WriteResult({

"nMatched" : 0,

"nUpserted" : 1,

"nModified" : 0,

"_id" : "http://naver.com"

})

> db.pageviews.update({ _id : 'http://daum.net' }, { $inc : { hits : 1} }, { upsert:true });

WriteResult({

"nMatched" : 0,

"nUpserted" : 1,

"nModified" : 0,

"_id" : "http://daum.net"

})

> db.pageviews.update({ _id : 'http://naver.com' }, { $inc : { hits : 1} }, { upsert:true });

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

> db.pageviews.update({ _id : 'http://daum.net' }, { $inc : { hits : 1} }, { upsert:true });

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

> db.pageviews.update({ _id : 'http://daum.net' }, { $inc : { hits : 1} }, { upsert:true });

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

> db.pageviews.find();

{ "_id" : "http://naver.com", "hits" : 2 }

{ "_id" : "http://daum.net", "hits" : 3 }

>

[addToSet]

> db.users.insert({

... _id : "A001", name : "홍길동",

... phones : { home : "02-2211-5678", office : "02-3429-1234" },

... email : [ "gdhong@hotmail.com", "gdhong@gmail.com" ]

... })

WriteResult({ "nInserted" : 1 })

> db.users.update({ _id:"A001" }, { $addToSet : { email : "gdhong1@daum.net" } }

)

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

> db.users.update({ _id:"A001" }, { $addToSet : { email : "gdhong2@daum.net" } }

)

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

> db.users.update({ _id:"A001" }, { $addToSet : { email : "gdhong3@daum.net" } }

)

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 1 })

> db.users.update({ _id:"A001" }, { $addToSet : { email : "gdhong3@daum.net" } }

)

WriteResult({ "nMatched" : 1, "nUpserted" : 0, "nModified" : 0 })

> db.users.find({ _id:"A001" })

{ "_id" : "A001", "name" : "홍길동", "phones" : { "home" : "02-2211-5678", "office" : "02-3429-1234" }, "email" : ["gdhong@hotmail.com", "gdhong@gmail.com", "gdhong1@daum.net", "gdhong2@daum.net", "gdhong3@daum.net" ] }

> db.users.find({ _id:"A001" }).pretty()

{

"_id" : "A001",

"name" : "홍길동",

"phones" : {

"home" : "02-2211-5678",

"office" : "02-3429-1234"

},

"email" : [

"gdhong@hotmail.com",

"gdhong@gmail.com",

"gdhong1@daum.net",

"gdhong2@daum.net",

"gdhong3@daum.net"

]

}

> db.scores.insert({ _id:1, kor : 80 })

WriteResult({ "nInserted" : 1 })

> db.scores.insert({ _id:2, kor : 90 })

WriteResult({ "nInserted" : 1 })

> db.scores.insert({ _id:3, kor : null })

WriteResult({ "nInserted" : 1 })

> db.scores.insert({ _id:4, eng : null })

WriteResult({ "nInserted" : 1 })

> db.scores.find()

{ "_id" : 1, "kor" : 80 }

{ "_id" : 2, "kor" : 90 }

{ "_id" : 3, "kor" : null }

{ "_id" : 4, "eng" : null }

> db.scores.find({ kor : null })

{ "_id" : 3, "kor" : null }

{ "_id" : 4, "eng" : null }

> db.scores.find({ eng : null })

{ "_id" : 1, "kor" : 80 }

{ "_id" : 2, "kor" : 90 }

{ "_id" : 3, "kor" : null }

{ "_id" : 4, "eng" : null }

필드가 없는것도 null로 인식

> db.scores.find({ eng : { $in : [ null ], $exists : true } });

{ "_id" : 4, "eng" : null }

[난이도高]

//도시 이름이 A또는 B로 시작하고 마지막이 C로 끝나는 것들

db.zipcodes.find({ city : /^(A|B)\w+C$/})

[MongoDB는 Javascript]

> db.serverBuildInfo()

{

"version" : "2.6.9",

"gitVersion" : "df313bc75aa94d192330cb92756fc486ea604e64",

"OpenSSLVersion" : "",

"sysInfo" : "Linux build20.nj1.10gen.cc 2.6.32-431.3.1.el6.x86_64 #1 SMP Fri Jan 3 21:39:27 UTC 2014 x86_64 BOOST_LIB_VERSION=1_49"

,

"loaderFlags" : "-fPIC -pthread -Wl,-z,now -rdynamic",

"compilerFlags" : "-Wnon-virtual-dtor -Woverloaded-virtual -fPIC -fno-strict-aliasing -ggdb -pthread -Wall -Wsign-compare -Wno-unkn

own-pragmas -Winvalid-pch -pipe -Werror -O3 -Wno-unused-function -Wno-deprecated-declarations -fno-builtin-memcmp",

"allocator" : "tcmalloc",

"versionArray" : [

2,

6,

9,

0

],

"javascriptEngine" : "V8",

"bits" : 64,

"debug" : false,

"maxBsonObjectSize" : 16777216,

"ok" : 1

}

>

> function add(x,y) { return x+y;}

> add(3,5)

8

위도,경도 메르카토르 도법

지구는 둥글다를 왜곡

삼각함수를 사용해서 보정

RDB에서는 인덱싱이 안됨

=> MongoDB에서는 가능

[Map / Reduce]

- map : 비정형 데이터를 집계가능한 형식으로 만드는 작업

- reduce : 집계

정형데이터에서는 reduce만 하면됨

map -> suffling (sort&merge) -> reduce

※ suffling 은 신경쓸필요없고, 자동으로 수행됨

[주의사항]

- map 결과가 단1건이면, shuffling, reduce를 수행하지 않음

☞ map 의 형식과 reduce의 형식이 다르기때문에

map결과와 reduce결과 형식을 일치하도록 작성

1. finalize 형식 정의 : A001 -> {avg:xxxx}

2. reduce결과형식 정의 : A001 -> {sum:xxx, count:xxx}

3. shuffling결과형식 정의 : A001 -> [{sum:90, count:1}, ... ]

4. map결과형식 정의 : A001 -> {sum:90, count:1}

db.words.drop()

db.words.insert({sentence : "peter Piper picked a peck of pickled peppers"})

db.words.insert({sentence : "A peck of pickled peppers Peter Piper picked"})

db.words.insert({sentence : "If Peter Piper picked a peck of Peppers"})

db.words.insert({sentence : "Where's the peck of pickled peppers Peter Piper picked"})

<<word count 예제>>

3. map : peter -> { count: 1 } <-- 처음만들때는 무조건 1

4. shuffling : peter -> [ { count: 1 }, ... ]

2. reduce : peter -> { count: 3 }

1. finalize : peter -> { count: 3 }

function m1() {

var arr = this.sentence.match(/\w+/g);

for (var i=0; i < arr.length;i++) {

emit(arr[i].toLowerCase(), { count:1 })

}

}

//peter -> [ { count: 1 }, ... ]

function r1(key, values) {

var result = { count: 0 };

for (var i=0; i < values.length;i++) {

result.count += values[i].count;

}

return result;

}

db.words.mapReduce(m1, r1, {

out : { replace : "words_count" }

})

db.words_count.find()

var str = "간장 공장 공장장은 '김'공장장이다!!";

var pat = /\w/g;

var pat = /[\wㄱ-힣]+/g;

arr = str.match(pat);

arr

\w : alphanumeric 한글자, 공백이나 특수문자는 아님

[\wㄱ-힣]+ : 한글포함이 반복됨

[결과]

var str = "간장 공장 공장장은 '김'공장장이다!!";

undefined

var pat = /[\wㄱ-힣]+/g;

undefined

arr = str.match(pat);

["간장", "공장", "공장장은", "김", "공장장이다"]

arr

["간장", "공장", "공장장은", "김", "공장장이다"]

집계가능한 용도로 모델링하는 것이 중요하다

[NASDAQ]

C:\mongodb\bin>mongorestore.exe -h s1 --port 27017 c:\dev\nasdaq_sample

2017-01-24T16:41:08.783+0900 Hotfix KB2731284 or later update is not installed, will zero-out data files

connected to: s1:27017

2017-01-24T16:41:08.929+0900 c:\dev\nasdaq_sample\nasdaq\stocks.bson

2017-01-24T16:41:08.929+0900 going into namespace [nasdaq.stocks]

2017-01-24T16:41:11.002+0900 Progress: 9092992/750052968 1% (bytes)

2017-01-24T16:41:14.251+0900 Progress: 19918261/750052968 2% (bytes)

2017-01-24T16:41:17.101+0900 Progress: 29909825/750052968 3% (bytes)

2017-01-24T16:41:20.003+0900 Progress: 42407251/750052968 5% (bytes)

2017-01-24T16:41:23.003+0900 Progress: 58681487/750052968 7% (bytes)

2017-01-24T16:41:26.086+0900 Progress: 63798371/750052968 8% (bytes)

2017-01-24T16:41:29.108+0900 Progress: 73152623/750052968 9% (bytes)

2017-01-24T16:41:32.005+0900 Progress: 79128372/750052968 10% (bytes)

2017-01-24T16:41:35.000+0900 Progress: 96903040/750052968 12% (bytes)

2017-01-24T16:41:38.002+0900 Progress: 115410417/750052968 15% (bytes)

2017-01-24T16:41:41.683+0900 Progress: 116454449/750052968 15% (bytes)

2017-01-24T16:41:44.001+0900 Progress: 130005048/750052968 17% (bytes)

2017-01-24T16:41:47.001+0900 Progress: 148398590/750052968 19% (bytes)

2017-01-24T16:41:50.123+0900 Progress: 159381342/750052968 21% (bytes)

2017-01-24T16:41:53.065+0900 Progress: 173415869/750052968 23% (bytes)

2017-01-24T16:42:00.215+0900 Progress: 175421083/750052968 23% (bytes)

2017-01-24T16:42:03.000+0900 Progress: 188876049/750052968 25% (bytes)

2017-01-24T16:42:06.000+0900 Progress: 204628648/750052968 27% (bytes)

2017-01-24T16:42:09.036+0900 Progress: 210788252/750052968 28% (bytes)

2017-01-24T16:42:12.002+0900 Progress: 222725060/750052968 29% (bytes)

2017-01-24T16:42:15.000+0900 Progress: 239431171/750052968 31% (bytes)

2017-01-24T16:42:18.001+0900 Progress: 253006817/750052968 33% (bytes)

2017-01-24T16:42:21.263+0900 Progress: 267103909/750052968 35% (bytes)

2017-01-24T16:42:24.014+0900 Progress: 272515559/750052968 36% (bytes)

2017-01-24T16:42:27.104+0900 Progress: 282074952/750052968 37% (bytes)

2017-01-24T16:42:30.000+0900 Progress: 286790464/750052968 38% (bytes)

2017-01-24T16:42:33.001+0900 Progress: 301513564/750052968 40% (bytes)

2017-01-24T16:42:36.021+0900 Progress: 305570408/750052968 40% (bytes)

2017-01-24T16:42:39.002+0900 Progress: 322243276/750052968 42% (bytes)

2017-01-24T16:42:42.001+0900 Progress: 340147269/750052968 45% (bytes)

2017-01-24T16:42:45.154+0900 Progress: 358229088/750052968 47% (bytes)

2017-01-24T16:42:48.001+0900 Progress: 367959593/750052968 49% (bytes)

2017-01-24T16:42:51.001+0900 Progress: 378383614/750052968 50% (bytes)

..................

2017-01-24T16:44:31.001+0900 Progress: 731941556/750052968 97% (bytes)

2017-01-24T16:44:34.002+0900 Progress: 749895846/750052968 99% (bytes)

4308303 objects found

2017-01-24T16:44:34.035+0900 Creating index: { key: { _id: 1 }, ns: "nasdaq.stocks", name: "_id_" }

2017-01-24T16:44:35.361+0900 c:\dev\nasdaq_sample\nasdaq\symbols.bson

2017-01-24T16:44:35.361+0900 going into namespace [nasdaq.symbols]

5960 objects found

2017-01-24T16:44:35.648+0900 Creating index: { key: { _id: 1 }, ns: "nasdaq.symbols", name: "_id_" }

> use nasdaq

switched to db nasdaq

> db.stocks.findOne()

{

"_id" : ObjectId("4d094f58c96767d7a0099d49"),

"exchange" : "NASDAQ",

"stock_symbol" : "AACC",

"date" : "2008-03-07",

"open" : 8.4,

"high" : 8.75,

"low" : 8.08,

"close" : 8.55,

"volume" : 275800,

"adj close" : 8.55

}

>

SELECT stock_symbol, AVG(close), MAX(close)

FROM stocks

WHERE stock_symbol LIKE 'GO%'

GROUP BY stock_symbol

3. map : "GOOG" -> { sum: this.close, count:1, max:this.close }

4. shuffling : "GOOG" -> [{ sum: xxx, count:xxx, max:xxx }, ... ]

2. reduce : "GOOG" -> { sum: xxx, count:xxx, max:xxx }

1. finalize : "GOOG" -> { avg : xxxx, max:xxxx }

function m2() {

emit(this.stock_symbol, { sum: this.close, count:1, max:this.close });

}

//"GOOG" -> [{ sum: xxx, count:xxx, max:xxx }, ... ]

function r2(key, values) {

var result = { sum:0, count:0, max:0 };

for (var i=0; i < values.length;i++) {

if (result.max < values[i].max) result.max = values[i].max;

result.sum += values[i].sum;

result.count += values[i].count;

}

return result;

}

db.stocks.createIndex({ stock_symbol:1 })

2017-01-24T16:57:10.639+0900 [conn2] build index on: nasdaq.stocks properties: { v: 1, key: { stock_symbol: 1.0 }, name: "stock_symbol_1", ns: "nasdaq.stocks" }

2017-01-24T16:57:10.657+0900 [conn2] building index using bulk method

2017-01-24T16:57:13.009+0900 [conn2] Index Build: 285900/4308303 6%

2017-01-24T16:57:16.000+0900 [conn2] Index Build: 1346300/4308303 31%

2017-01-24T16:57:22.649+0900 [conn2] Index Build: 2618300/4308303 60%

2017-01-24T16:57:25.000+0900 [conn2] Index Build: 3228300/4308303 74%

2017-01-24T16:57:28.000+0900 [conn2] Index Build: 3873300/4308303 89%

2017-01-24T16:57:41.000+0900 [conn2] Index: (2/3) BTree Bottom Up Progress: 3856900/4308303 89%

2017-01-24T16:57:42.370+0900 [conn2] done building bottom layer, going to commit

2017-01-24T16:57:57.544+0900 [PeriodicTaskRunner] task: DBConnectionPool-cleaner took: 334ms

2017-01-24T16:57:57.588+0900 [PeriodicTaskRunner] task: WriteBackManager::cleaner took: 22ms

2017-01-24T16:58:13.275+0900 [conn2] build index done. scanned 4308303 total records. 62.606 secs

use nasdaq

> db.avgmax.find()

{ "_id" : "GOAM", "value" : { "max" : 16.06, "avg" : 3.6048161574313795 } }

{ "_id" : "GOLD", "value" : { "max" : 55.65, "avg" : 20.228447909284228 } }

{ "_id" : "GOLF", "value" : { "max" : 11.49, "avg" : 7.4655319148936155 } }

{ "_id" : "GOOD", "value" : { "max" : 22.19, "avg" : 17.606393728222997 } }

{ "_id" : "GOODO", "value" : { "max" : 22.45, "avg" : 21.802142857142854 } }

{ "_id" : "GOODP", "value" : { "max" : 26.81, "avg" : 24.28254641909813 } }

{ "_id" : "GOOG", "value" : { "max" : 741.79, "avg" : 389.0802908277405 } }

{ "_id" : "GORX", "value" : { "max" : 7.69, "avg" : 3.0498910081744017 } }

[Aggregation]

> db.stocks.aggregate([{

... "$match" : { "stock_symbol" : /^GO/,

... "date" : { "$gte" : "2006-01-01", "$lte" : "2006-12-31" } }

... },{

... "$project" : { "stock_symbol":1, "close":1 }

... }, {

... "$group" : {

... "_id" : "$stock_symbol",

... "max" : { "$max" : "$close" },

... "avg" : { "$avg" : "$close" }

... }

... }, {

... "$sort" : { "stock_symbol" : 1 }

... }]);

{ "_id" : "GORX", "max" : 4.88, "avg" : 3.912828685258964 }

{ "_id" : "GOOG", "max" : 509.65, "avg" : 411.1852988047812 }

{ "_id" : "GOODP", "max" : 26.01, "avg" : 25.600874999999984 }

{ "_id" : "GOOD", "max" : 22.19, "avg" : 19.394780876494014 }

{ "_id" : "GOLF", "max" : 10.56, "avg" : 8.820661764705884 }

{ "_id" : "GOLD", "max" : 26.32, "avg" : 20.593386454183268 }

{ "_id" : "GOAM", "max" : 9.84, "avg" : 3.908924302788845 }

db.students.insert( { _id:1, student: "Richard Gere",

courses: ['Music', 'Korean', 'Mathematics'] });

db.students.insert( { _id:2, student: "Will Smith",

courses: ['English', 'Korean', 'Science', 'Music'] });

db.students.insert( { _id:3, student: "Barack Obama",

courses: ['Music', 'Theatre', 'Dance'] });

db.students.insert( { _id:4, student: "Mitt Romney",

courses: ['History', 'English', 'Science', 'Korean'] });

db.students.insert( { _id:5, student: "Tommy Lee Jones",

courses: ['Arts', 'Mathematics', 'Dance'] });

var start = new Date();

db.students.aggregate([

{

$project : { _id:0, s:"$student", cs : "$courses" }

},

{

$unwind : "$cs"

},

{

$group : {

_id: "$cs",

students : { $addToSet : "$s" }

}

}

])

var end = new Date();

print(end-start);

[GUI]

https://mongobooster.com/

요즘 뜨고있음

모니터링 도구 내장

[Java Driver : Jongo]

package com.multi.jongo.test;

import java.net.UnknownHostException;

import java.util.ArrayList;

import java.util.List;

import org.jongo.Jongo;

import org.jongo.MongoCollection;

import com.mongodb.DB;

import com.mongodb.MongoClient;

import com.multi.jongo.test.vo.Person;

import com.multi.jongo.test.vo.Score;

public class JongoClient {

public static void main(String[] args) throws UnknownHostException {

DB db = new MongoClient("s1:27017").getDB("test2");

Jongo jongo = new Jongo(db);

MongoCollection persons = jongo.getCollection("persons");

List<String> emails1 = new ArrayList<String>();

emails1.add("gdhong@gmail.com");

emails1.add("gdhong123@yahoo.com");

Person p1 = new Person("gdhong", "홍길동", new Score(100,70,60,90), emails1);

persons.insert(p1);

List<String> emails2 = new ArrayList<String>();

emails2.add("mrlee@hotmail.com");

emails2.add("mrlee121@gmail.com");

Person p2 = new Person("mrlee", "이몽룡", new Score(80,90,70,70), emails2);

persons.insert(p2);

System.out.println("test2 에 쓰기 완료!!");

}

}

Spring Mongo pdf 로 검색하면

- maven dependency 확인가능

'dev > NoSQL 모델링' 카테고리의 다른 글

| NoSql 모델링 기법 (0) | 2017.01.25 |

|---|---|

| Shard Cluster (1) | 2017.01.25 |

| Replica set (0) | 2017.01.25 |

| 3. Column Family Database (0) | 2017.01.24 |

| NO SQL을 선택하는 이유 (0) | 2017.01.23 |